Creating better online multiple choice questions

In this blog post we explore good practices around creating online computing questions, specifically multiple choice questions (MCQs). Multiple choice questions are a popular way to help teachers and learners work out the next steps in learning, and to assess learning in examinations. As a case study, we look at some data related to learner responses to computing questions on the Oak National Academy platform.

The case study illustrates the many things MCQ authors have to think about while designing questions, and that there is much more research needed to understand how to get an MCQ “just right”.

Uses of multiple choice questions

Online auto-marked MCQs are now being integrated into classroom activities, set as homework, and used in self-led learning at home. Software products involving MCQs, such as Kahoot and Socratic, are easy to use for many, and have become popular in some learning contexts. MCQs may have become more prevalent due to increased online teaching and the availability of whole curricula through platforms such as the Oak National Academy.

An international group of researchers from China, Spain, Singapore, and the UK recently looked into the reasons why MCQ-based testing might improve learning. Chunliang Yang and his co-authors concluded that there are three main ways that MCQ tests help learners learn:

- They provide learners with additional exposure to learning content

- They provide learners with content in the same format that they will be later assessed in

- They motivate learners, e.g. by prompting them to commit more effort to learning in general

What does the research say about creating multiple choice questions?

In recent research reviewing the use of MCQs, Andrew Butler from Washington University in St Louis looked at the effectiveness of MCQs in relation to learning, rather than assessment. Andrew gives the following advice for educators creating MCQs for learning:

- Think about the thinking processes the learner will use when answering the question, and make sure the processes are productive for their learning

- Don’t make the question super easy or too difficult, but make it challenging — the difficulty needs to be “just right”

- Keep the phrasing of the question simple

- Ensure that all answers are plausible; providing three or four answers is usually a good idea

- Be aware that if learners pick the wrong answer, this can reinforce the wrong thinking

- Provide corrective feedback to learners who pick the wrong answer

What I find particularly interesting about Andrew’s advice is the need to make the difficulty of the MCQ “just right” for learners. But what does “just right” look like in practice? More research is needed to work this out.

The anatomy of a multiple choice question

When talking about MCQs, there are technical terms to describe question features, e.g.:

- Incorrect answers are called distractors (or lures)

- A distractor is defined as plausible if it’s an answer a layperson would see as a reasonable answer

- Plausible distractors are called working distractors

Here at the Foundation, we created MCQs for the Oak National Academy when we adapted our Teach Computing Curriculum classroom materials into video lessons and accompanying home learning content to support learners and teachers during school closures. Data about what questions are attempted on the Oak platform, and what answer options are chosen, is stored securely by Oak National Academy. The Oak team kindly provided us with four months of anonymous data related to responses to the MCQs in the ‘GCSE Computer Science – Data representations’ unit.

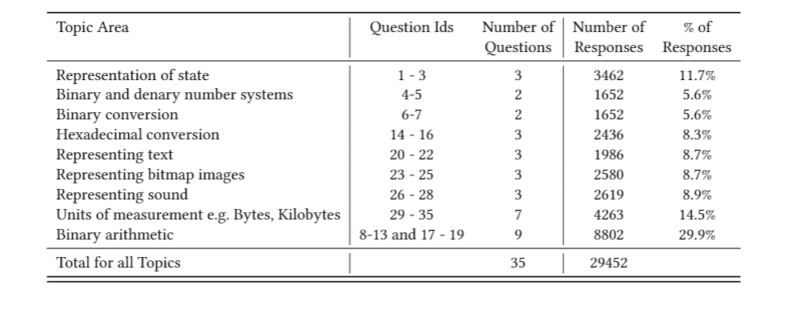

Over this period of four months, learners on the platform made more than 29,000 question attempts on the thirty-five questions across the nine lessons that make up this data representation unit. Here is a breakdown of the questions by topic area:

As shown in the table, more questions relate to binary arithmetic than to any other topic area. This was a specific design decision, as it is well known that learners need lots of practice with the processes involved in answering binary arithmetic questions.

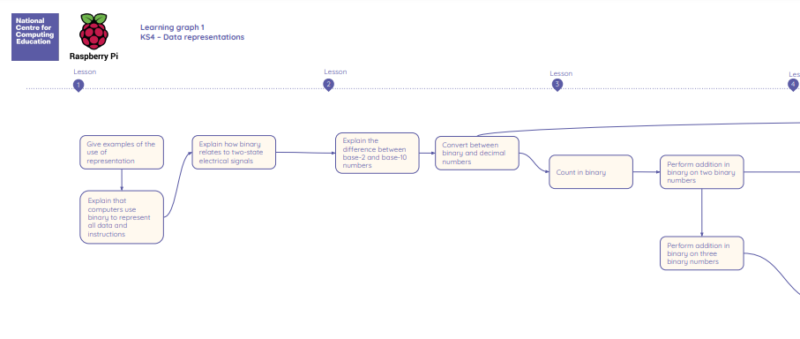

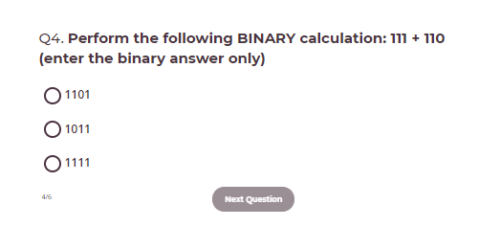

Let’s look at an example question from the binary arithmetic topic area, with one correct answer and two distractors. The learning objective being addressed with this question is ‘Perform addition in binary on two binary numbers’.

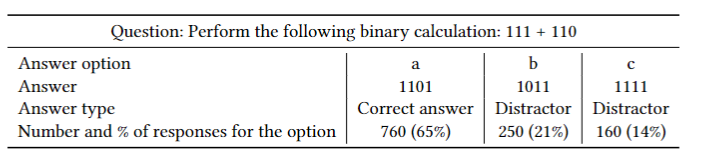
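As a reminder of the process this learning objective targets, here is a minimal sketch of column-by-column binary addition in Python. The operands are hypothetical and are not taken from the Oak question, and `add_binary` is an illustrative name rather than anything used on the platform.

```python
def add_binary(a: str, b: str) -> str:
    """Add two binary strings column by column, carrying as on paper."""
    # Pad the shorter number with leading zeros so the columns line up.
    width = max(len(a), len(b))
    a, b = a.zfill(width), b.zfill(width)

    carry = 0
    digits = []
    # Work from the rightmost (least significant) column to the left.
    for bit_a, bit_b in zip(reversed(a), reversed(b)):
        total = int(bit_a) + int(bit_b) + carry
        digits.append(str(total % 2))  # the digit written in this column
        carry = total // 2             # the carry into the next column
    if carry:
        digits.append("1")
    return "".join(reversed(digits))


# Hypothetical operands: 0101 (5) + 0011 (3) = 1000 (8)
print(add_binary("0101", "0011"))
```

Each step of the loop is a place where a learner can slip, for example by forgetting a carry, which is one way plausible distractors can arise for questions of this kind.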

As shown in the table below, in four months, 1170 attempts were made to answer the example question. 65% of the attempts were correct responses, and 35% were not, with 21% of responses being distractor b, and 14% distractor c. These distractors appear to be working distractors, as they were chosen by more than 5% of learners, which has been suggested as a rule-of-thumb threshold that distractors have to clear to be classed as working.
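The 5% rule of thumb can be sketched in a few lines of Python. This is an illustration only: the shares are the percentages quoted above, the assumption that option a is the correct answer follows from b and c being named as the distractors, and the function name `working_distractors` is hypothetical.

```python
# Shares of the 1170 attempts, as reported in the post.
# Assumption: option "a" is the correct answer, since b and c are distractors.
response_shares = {"a": 0.65, "b": 0.21, "c": 0.14}
correct_option = "a"

# Rule of thumb: a distractor is "working" if more than 5% of learners chose it.
WORKING_THRESHOLD = 0.05


def working_distractors(shares, correct, threshold=WORKING_THRESHOLD):
    """Return the incorrect options chosen by more than `threshold` of learners."""
    return sorted(
        option
        for option, share in shares.items()
        if option != correct and share > threshold
    )


print(working_distractors(response_shares, correct_option))
```

With these figures both distractors clear the threshold. Note that this check says nothing about whether the question's overall difficulty is appropriate; it only flags distractors that almost nobody picks.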

However, because of the lack of research into MCQs, we cannot say for certain that this question is “just right” — it may be too hard. We need to do further research to find this out.

Creating multiple choice questions is not easy

Creating good MCQs is not easy, because question authors need to think about many things, including:

- What learning objectives are to be addressed

- What plausible distractors can be used

- What level of difficulty is right for learners

- What type of thinking the questions are encouraging, and how this is useful for learners

For MCQs to be useful for learners and teachers, much more research is needed to show how to reliably produce MCQs that are “just right” and encourage productive thinking processes. We very much look forward to exploring this topic in our research work.

To find out more about the computing education research we are doing, you can browse our website, take part in our monthly seminars, and read our publications.

3 comments

James Carroll

I still believe the best solution for learning is asking questions and having students answer them. Multiple choice is what we called “multiple guess.” I loved those tests because they didn’t require my full understanding. I get that in online situations it’s easier to just offer choices and it’s certainly MUCH easier to check answers when multiple choice is used.

Administrasi Kurikulum

I just remember when I went to school 9 years ago in Indonesia... no edtech.

Stuart Andrew Jones

MCQs are widely used not only in courseware but in examinations for professional certification and qualification for educational opportunity (e.g. the SAT and ACT examinations). Proper design of such questions is important, but in courseware, providing feedback as well as additional educational material in response to incorrect answers is far more critical, in my opinion. I also believe that another option besides the answers should be included in certain of these questions: an ‘I don’t understand’ choice that leads the learner to additional exposition. Education via computer is clearly going to become a key technique in preparing learners of all ages for upcoming life challenges. Our favorite single board computer looks like the great educational game changer, as a platform for computer-aided education that is affordable, superbly supported, and readily available.