-

Beyond content: helping teachers feel ready to teach AI

Catarina Marques from TUMO Portugal shares educators' key learnings from the Experience AI programme.

-

Start small, dream big with Code Club: Become an Incubator Partner

Join us to establish and grow free Code Clubs in communities across the globe

-

AI is not neutral: What recent research says about bias, identity, and power

Computational methods are not objective — here's how to address this in the classroom

-

A day of big ideas at Coolest Projects USA Minnesota 2026

Celebrating young tech creators at Coolest Projects Minnesota 2026.

-

Why localisation matters for AI literacy: Lessons from Uzbekistan

Learn how Experience AI is building AI literacy in Uzbekistan.

-

Professional development: How to stay ahead in a fast-changing subject

Practical tips for effective professional development for computing teachers

-

What does ‘thinking’ mean now?

Dr Shuchi Grover on the need to emphasise critical thinking in CS and AI education

-

An astronomical anniversary: Young people’s code heads to the International Space Station

Code from 25,707 young people runs on the ISS in Astro Pi 2025/26

-

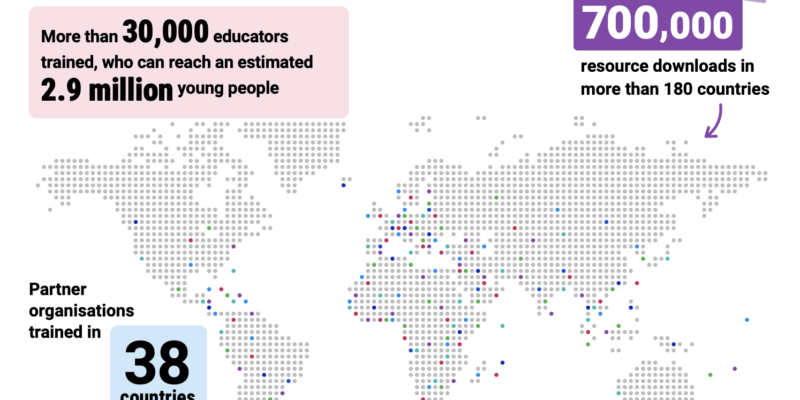

Experience AI: Reaching millions of young people with AI literacy

Experience AI builds knowledge and confidence to engage with AI

-

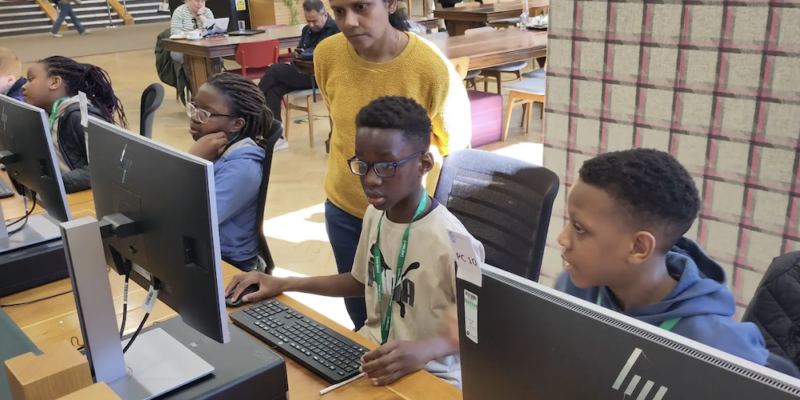

Many paths into mentoring: Building inclusive Code Clubs in Glasgow

Glasgow Code Clubs build inclusive spaces that grow confidence in young people and volunteers.

-

Experience CS: The complete set of units is live

Experience CS complete: 18 free cross-curricular CS units for grades 3–8

-

Highlights from Astro Pi 2025–2026 community events

Astro Pi 2025–26 events inspire youth coding through workshops and space labs