What does AI mean for computing education?

It’s been less than a year since ChatGPT catapulted generative artificial intelligence (AI) into mainstream public consciousness, reigniting the debate about the role that these powerful new technologies will play in all of our futures.

‘Will AI save or destroy humanity?’ might seem like an extreme title for a podcast, particularly if you’ve played with these products and enjoyed some of their obvious limitations. The reality is that we are still in the foothills of what AI technology can achieve (think World Wide Web in the 1990s), and lots of credible people are predicting an astonishing pace of progress over the next few years, promising the radical transformation of almost every aspect of our lives. Comparisons with the Industrial Revolution abound.

At the same time, there are those saying it’s all moving too fast; that regulation isn’t keeping pace with innovation. One of the UK’s leading AI entrepreneurs, Mustafa Suleyman, said recently: “If you don’t start from a position of fear, you probably aren’t paying attention.”

What does all this mean for education, and particularly for computing education? Is there any point trying to teach children about AI when it is all changing so fast? Does anyone need to learn to code anymore? Will teachers be replaced by chatbots? Is assessment as we know it broken?

If we’re going to seriously engage with these questions, we need to understand that we’re talking about three different things:

- AI literacy: What it is and how we teach it

- Rethinking computer science (and possibly some other subjects)

- Enhancing teaching and learning through AI-powered technologies

AI literacy: What it is and how we teach it

For young people to thrive in a world that is being transformed by AI systems, they need to understand these technologies and the role they could play in their lives.

The first problem is defining what AI literacy actually means. What are the concepts, knowledge, and skills that it would be useful for a young person to learn?

In the past couple of years there has been a huge explosion in resources that claim to help young people develop AI literacy. Our research team mapped and categorised over 500 resources, and undertook a systematic literature review to understand what research has been done on K–12 AI classroom interventions (spoiler: not much).

The reality is that — with a few notable exceptions — the vast majority of AI literacy resources available today are probably doing more harm than good. For example, in an attempt to be accessible and fun, many materials anthropomorphise AI systems, using human terms to describe them and their functions and thereby perpetuating misconceptions about what AI systems are and how they work.

What emerged from this work at the Raspberry Pi Foundation is the SEAME model, which articulates the concepts, knowledge, and skills that are essential ingredients of any AI literacy curriculum. It separates out the social and ethical, application, model, and engine levels of AI systems — all of which are important — and gets specific about age-appropriate learning outcomes for each.

This research has formed the basis of Experience AI (experience-ai.org), a suite of resources, lesson plans, videos, and interactive learning experiences created by the Raspberry Pi Foundation in partnership with Google DeepMind, which is already being used in thousands of classrooms.

Defining AI literacy and developing resources is part of the challenge, but that doesn’t solve the problem of how we get them into the hands and minds of every young person. This will require policy change. We need governments and education system leaders to grasp that a foundational understanding of AI technologies is essential for creating economic opportunity, ensuring that young people have the mindsets to engage positively with technological change, and avoiding a widening of the digital divide. We’ve messed this up before with digital skills. Let’s not do it again.

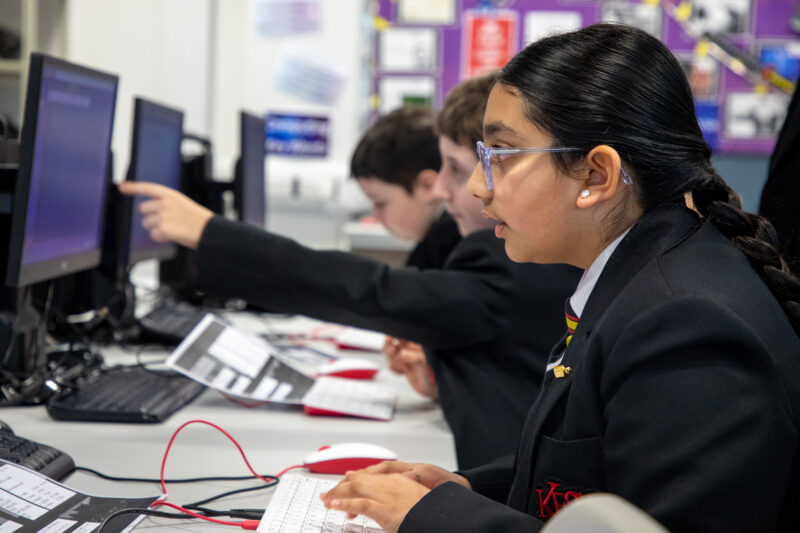

More than anything, we need to invest in teachers and their professional development. While there are some fantastic computing teachers with computer science qualifications, the reality is that most of the computing lessons taught anywhere on the planet are taught by a non-specialist teacher. That is even more true of anything related to AI. If we’re serious about AI literacy for young people, we have to get serious about AI literacy for teachers.

Rethinking computer science

Alongside introducing AI literacy, we also need to take a hard look at computer science. At the very least, we need to make sure that computer science curricula include machine learning models, explaining how they constitute a new paradigm for computing, and give more emphasis to the role that data will play in the future of computing. Adding anything new to an already packed computer science curriculum means tough choices about what to deprioritise to make space.

And, while we’re reviewing curricula, what about biology, geography, or any of the other subjects that are just as likely to be revolutionised by big data and AI? As part of Experience AI, we are launching some of the first lessons focusing on ecosystems and AI, which we think should be at the heart of any modern biology curriculum.

There is already a lively debate about the extent to which the new generation of AI technologies will make programming as we know it obsolete. In January, the prestigious ACM journal ran an opinion piece from Matt Welsh, founder of an AI-powered programming start-up, in which he said: “I believe the conventional idea of ‘writing a program’ is headed for extinction, and indeed, for all but very specialised applications, most software, as we know it, will be replaced by AI systems that are trained rather than programmed.”

With GitHub (now part of Microsoft) claiming that their pair programming technology, Copilot, is now writing 46 percent of developers’ code, it’s perhaps not surprising that some are saying young people don’t need to learn how to code. It’s an easy political soundbite, but it just doesn’t stand up to serious scrutiny.

Even if AI systems can improve to the point where they generate consistently reliable code, it seems to me just as likely that this will increase the demand for more complex software, leading to greater demand for programmers. There is historical precedent for this: the invention of high-level programming languages such as Python dramatically simplified the act of humans providing instructions to computers, leading to more complex software and a much greater demand for developers.

However these AI-powered tools develop, it will still be essential for young people to learn the fundamentals of programming and to get hands-on experience of writing code as part of any credible computer science course. Practical experience of writing computer programs is an essential part of learning how to analyse problems in computational terms; it brings the subject to life; it will help young people understand how the world around them is being transformed by AI systems; and it will ensure that they are able to shape that future, rather than it being something that is done to them.

Enhancing teaching and learning through AI-powered technologies

Technology has already transformed learning. YouTube is probably the most important educational innovation of the past 20 years, democratising both the creation and consumption of learning resources. Khan Academy, meanwhile, integrated video instruction into a learning experience that gamified formative assessment. Our own edtech platform, Ada Computer Science, combines comprehensive instructional materials, a huge bank of questions designed to help learning, and automated marking and feedback to make computer science easier to teach and learn. Brilliant though these are, none of them have even begun to harness the potential of AI systems like large language models (LLMs).

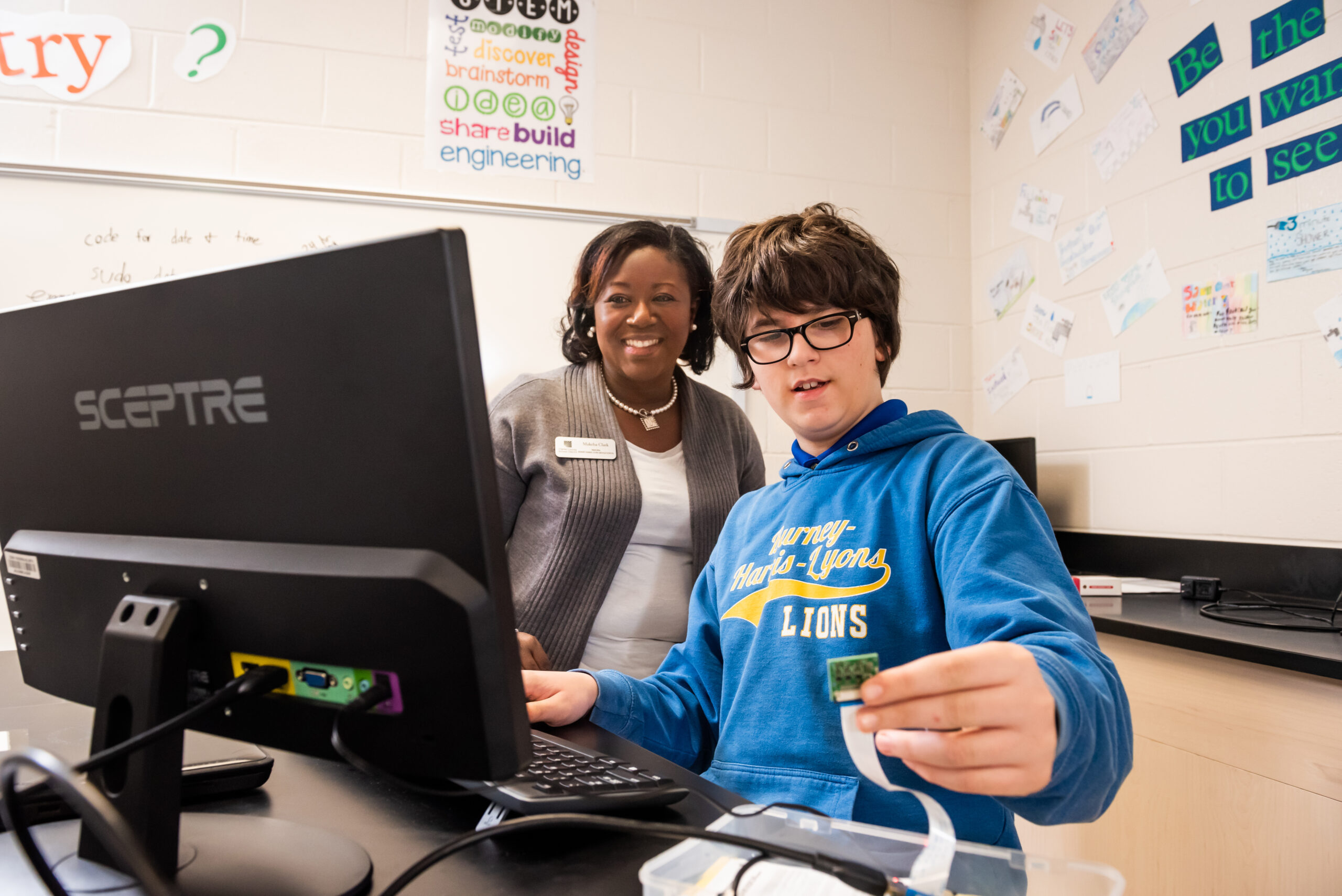

One area where I think we’ll see huge progress is feedback. It’s well-established that good-quality feedback makes a huge difference to learning, but a teacher’s ability to provide feedback is limited by their time. No one is seriously claiming that chatbots will replace teachers, but — if we can get the quality right — LLM applications could provide every child with unlimited, on-demand feedback. AI-powered feedback — not giving students the answers, but coaching, suggesting, and encouraging in the way that great teachers already do — could be transformational.

We are already seeing edtech companies racing to bring new products and features to market that leverage LLMs, and my prediction is that the pace of that innovation is going to increase exponentially over the coming years. The challenge for all of us working in education is how we ensure that ethics and privacy are at the centre of the development of these technologies. That’s important for all applications of AI, but especially so in education, where these systems will be unleashed directly on young people. How much data from students will an AI system need to access? Can that data — aggregated from millions of students — be used to train new models? How can we communicate transparently the limitations of the information provided back to students?

Ultimately, we need to think about how parents, teachers, and education systems (the purchasers of edtech products) will be able to make informed choices about what to put in front of students. Standards will have an important role to play here, and I think we should be exploring ideas such as an AI kitemark for edtech products that communicates whether they meet a set of standards around bias, transparency, and privacy.

Realising potential in a brave new world

We may very well be entering an era in which AI systems dramatically enhance the creativity and productivity of humanity as a species. Whether the reality lives up to the hype or not, AI systems are undoubtedly going to be a big part of all of our futures, and we urgently need to figure out what that means for education, and what skills, knowledge, and mindsets young people need to develop in order to realise their full potential in that brave new world.

That’s the work we’re engaged in at the Raspberry Pi Foundation, working in partnership with individuals and organisations from across industry, government, education, and civil society.

If you have ideas and want to get involved in shaping the future of computing education, we’d love to hear from you.

This article will also appear in issue 22 of Hello World magazine, which focuses on teaching and AI. We are publishing this new issue on Monday 23 October. Sign up for a free digital subscription to get the PDF straight to your inbox on the day.
