-

A Code Club in every school and library

Let's give all young people the skills and confidence to thrive in the age of AI

-

Coolest Projects 2025: Where 11,980 young tech creators shared their ideas

11,980 young creators from 41 countries wowed with 5,952 tech projects at Coolest Projects 2025

-

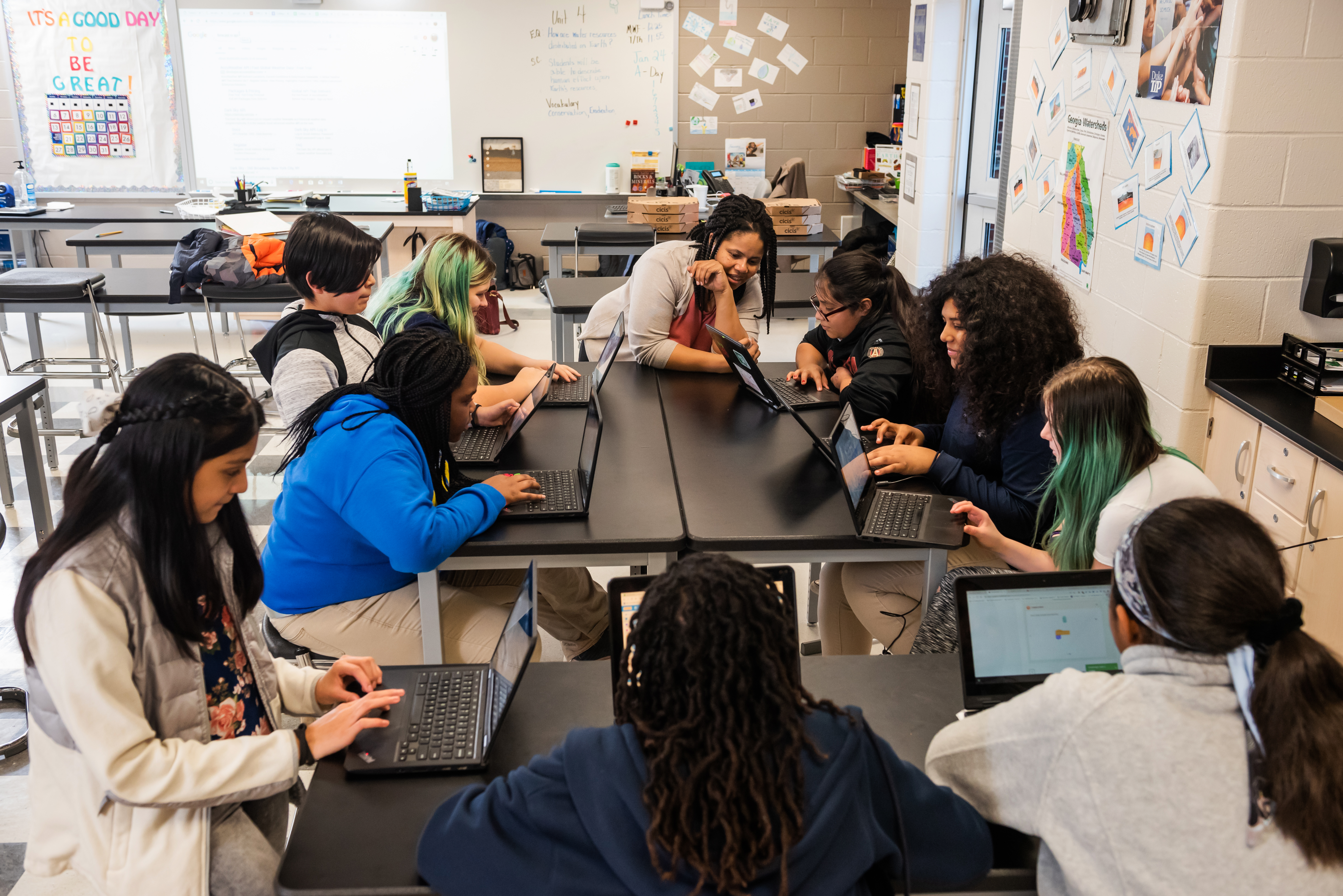

Experience CS: A free integrated curriculum for computer science

Six integrated computer science units are available, with more on the way

-

Adapting our computing curriculum resources for Kenya — the journey so far

Supporting teachers and students in Kenya with tailored computing lessons

-

Young creators on the move at Coolest Projects Belgium 2025

Young coders wow at Coolest Projects Belgium 2025 with creative tech from AI to robotics

-

From player to maker: Learn to code by creating your own game

Creating a game is one of the most exciting ways to explore coding

-

How to give your students structure as they learn programming skills

Teach coding with the 'levels of abstraction' framework to help learners with problem-solving

-

Igniting innovation: How Experience AI is empowering teachers and students across Kenya

The training sparked more than curiosity — it sparked a shift in mindset

-

Bringing data science to life for K–12 students with the ‘API Can Code’ curriculum

A student-designed curriculum that helps learners build real-world data science skills

-

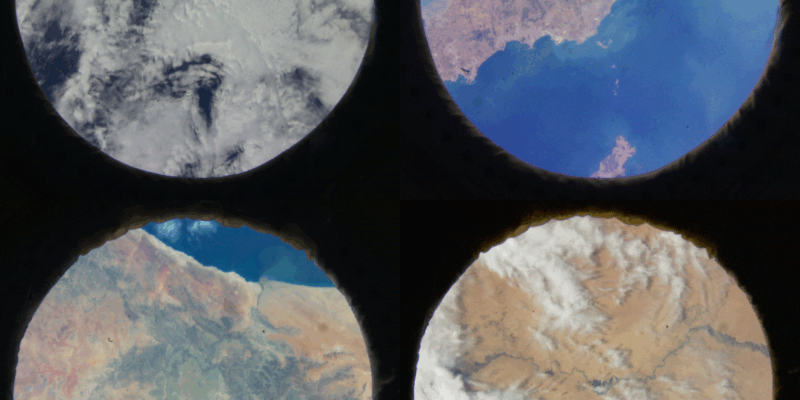

Astro Pi 2024/25: Another stellar year of space education concludes

Young people are now receiving their certificates and data from the ISS

-

Discover the incredible impact of Code Club: The Code Club annual survey report 2025

Discover how Code Clubs have helped young people build digital skills and confidence this year

-

Join our free data science education workshop for teachers

Sign up by 20 June to take part